In 2013, if you said “we don’t need DevOps,” you were already behind.

In 2026, saying “we’ll figure out LLMOps later” will sound the same.

Every generation of software invents the operational discipline it needs.

Monoliths gave us release engineering.

Microservices gave us DevOps.

AI-native systems are giving us large language model operations (LLMOps).

This isn’t about adding another layer of tooling.

It’s about retaining control.

Because LLM-driven products don’t just scale traffic.

They scale behavior.

And behavior, when left ungoverned, doesn’t fail loudly.

- It drifts

- It compounds cost

- It erodes trust

That’s the shift most teams are underestimating.

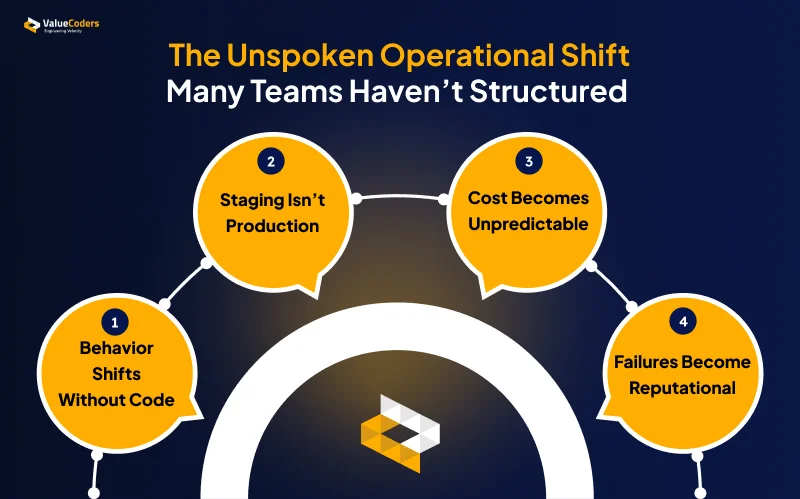

The Quiet Operational Shift Most Teams Haven’t Formalized

AI adoption didn’t start as an operational problem.

It started as a capability advantage.

- Faster content generation.

- Smarter workflows.

- Embedded copilots.

But as AI features moved from experimentation to revenue-critical infrastructure, the rules changed.

1. Behavior Changes Without Code Changes

In LLM-driven systems:

- Model providers update weights

- Retrieval data changes

- Prompts get tweaked

- User inputs evolve

The code stays the same.

The behavior doesn’t.

Traditional DevOps pipelines are blind to this.
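
One way to make these non-code changes visible is to fingerprint the inputs that shape behavior and compare that fingerprint between deployments. The sketch below is illustrative only: the function name, the three fields, and the sample values are assumptions, not part of any specific tool.

```python
import hashlib
import json


def behavior_fingerprint(model_id: str, prompt_template: str,
                         retrieval_snapshot: str) -> str:
    """Hash the inputs that shape LLM behavior but live outside the code."""
    payload = json.dumps(
        {
            "model_id": model_id,
            "prompt_template": prompt_template,
            "retrieval_snapshot": retrieval_snapshot,
        },
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()[:12]


# Hypothetical values: same code, same prompt, but the provider's model changed.
deployed = behavior_fingerprint("model-2025-01", "Summarize: {text}", "index-v7")
current = behavior_fingerprint("model-2025-03", "Summarize: {text}", "index-v7")

if deployed != current:
    print("Behavioral inputs changed without a code change; re-run evaluation.")
```

A pipeline that stores this fingerprint with each release can trigger re-evaluation whenever it changes, even when the commit hash does not.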

2. “Works in Staging” Stops Meaning Anything

For deterministic systems:

- Test case passes → deployment confidence

For LLM systems:

- Same prompt

- Same model

- Different outputs

You cannot rely on traditional pass/fail testing, because a single run no longer tells you how the system behaves on the next one.

Without structured evaluation pipelines, regressions ship silently.
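
A minimal sketch of what replaces a single assertion: run the same prompt several times and measure how often the output is acceptable. The `fake_model` function and the "5 days" check are hypothetical stand-ins for a real model call and a real acceptance rule.

```python
import random
from typing import Callable


def pass_rate(generate: Callable[[str], str], check: Callable[[str], bool],
              prompt: str, samples: int = 20) -> float:
    """Run the same prompt several times; return the share of acceptable outputs."""
    passed = sum(1 for _ in range(samples) if check(generate(prompt)))
    return passed / samples


# Hypothetical stand-in for a model call: non-deterministic on purpose.
rng = random.Random(0)


def fake_model(prompt: str) -> str:
    return rng.choice([
        "Refund issued within 5 days.",
        "Refunds take 5 days.",
        "I am not sure.",
    ])


rate = pass_rate(fake_model, lambda out: "5 days" in out, "What is the refund window?")
print(f"pass rate: {rate:.0%}")
```

The release question then shifts from "did the test pass?" to "is the pass rate above the level we agreed on?".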

3. Cost Becomes Unpredictable

AI systems introduce:

- Token-based economics

- Feature-level cost variability

- Usage-driven scaling

Without semantic observability:

- Finance sees surprises

- Margins compress

- Optimization becomes reactive

This is where the LLMOps vs DevOps distinction becomes operational, not theoretical.
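
To make token-based economics concrete, here is a rough sketch that attributes estimated spend to product features. The per-token prices and the call records are made-up assumptions; real rates depend on the provider and model.

```python
from collections import defaultdict

# Assumed prices in USD per 1,000 tokens; replace with your provider's real rates.
PRICE_PER_1K = {"input": 0.0005, "output": 0.0015}


def cost_by_feature(calls: list[dict]) -> dict[str, float]:
    """Sum estimated spend per product feature from per-call token counts."""
    totals: dict[str, float] = defaultdict(float)
    for call in calls:
        cost = ((call["input_tokens"] / 1000) * PRICE_PER_1K["input"]
                + (call["output_tokens"] / 1000) * PRICE_PER_1K["output"])
        totals[call["feature"]] += cost
    return dict(totals)


# Hypothetical call log for two features.
calls = [
    {"feature": "summarize", "input_tokens": 2000, "output_tokens": 1000},
    {"feature": "summarize", "input_tokens": 4000, "output_tokens": 1000},
    {"feature": "chat", "input_tokens": 1000, "output_tokens": 2000},
]
print(cost_by_feature(calls))
```

With these assumed numbers, "summarize" comes to roughly $0.006 and "chat" to roughly $0.0035, which is the kind of per-feature view finance teams usually lack.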

4. Failures Become Reputational, Not Technical

When AI fails:

- It answers confidently but incorrectly

- It generates unsafe output

- It produces inconsistent responses

- It degrades trust gradually

These are not 500 errors. They are credibility leaks.

AI systems need operational discipline beyond infrastructure. Build governed large language model operations before scale exposes the gaps.

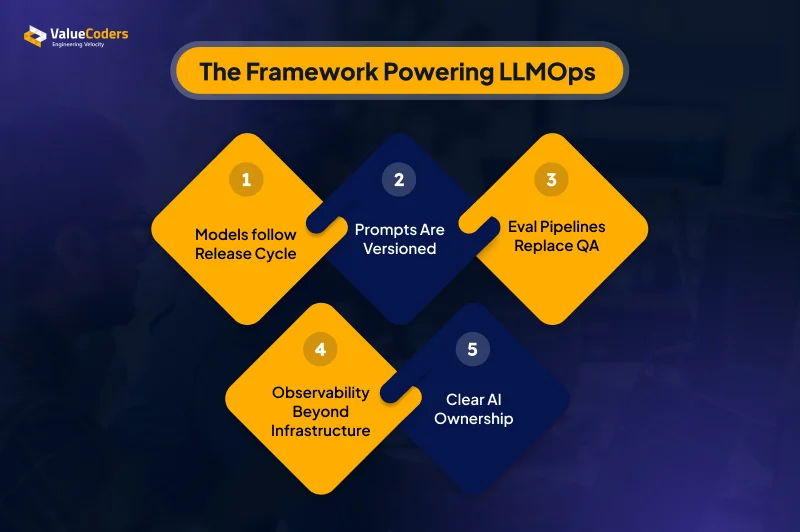

The Operating Model Behind Large Language Model Operations

LLMOps is not a platform.

It’s not a dashboard.

It is the operating layer that governs model behavior the same way DevOps governs infrastructure.

1. Model Lifecycle Is Treated Like a Release Cycle

Model changes are not silent upgrades.

They require:

- Controlled evaluation before rollout

- Side-by-side benchmarking of old vs new models

- Rollback strategy defined in advance

- Clear ownership of upgrade decisions

Model selection becomes an engineering decision, not a vendor announcement you react to.
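
As an illustration of side-by-side benchmarking, the sketch below compares per-example scores for the current and candidate models on the same evaluation set and returns a decision. The 0.02 regression budget and the scores are assumptions chosen for the example.

```python
from statistics import mean


def compare_models(current_scores: list[float], candidate_scores: list[float],
                   max_regression: float = 0.02) -> str:
    """Return 'promote' or 'hold' given per-example scores on the same eval set."""
    if len(current_scores) != len(candidate_scores):
        raise ValueError("Both models must be scored on the same examples.")
    delta = mean(candidate_scores) - mean(current_scores)
    return "promote" if delta >= -max_regression else "hold"


current = [0.90, 0.85, 0.80, 0.95]     # hypothetical scores, model in production
candidate = [0.92, 0.70, 0.78, 0.94]   # hypothetical scores, proposed upgrade

print(compare_models(current, candidate))  # prints "hold": mean drops by 0.04
```

A "hold" result means the current model stays in place, which is the simplest form of a rollback strategy defined in advance.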

In 2026, AI development services will be judged by how well they operationalize AI, not by how flashy their demos look.

2. Prompts Are Versioned Like Source Code

In mature LLM production workflows:

- Prompts live in repositories

- Changes go through review

- Behavioral diffs are tracked

- Rollbacks are possible

- Canary releases validate impact

A prompt change is a behavioral change.

It must be governed accordingly.
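
A toy sketch of what versioned prompts with rollback can look like. In practice the history would live in a Git repository; the in-memory `PromptRegistry` class here is a hypothetical stand-in that only shows the idea.

```python
import hashlib


class PromptRegistry:
    """In-memory stand-in for a prompt store backed by version control."""

    def __init__(self) -> None:
        self._history: list[tuple[str, str]] = []  # (version id, prompt text)

    def publish(self, text: str) -> str:
        """Store a new prompt version and return its content-derived id."""
        version = hashlib.sha256(text.encode("utf-8")).hexdigest()[:8]
        self._history.append((version, text))
        return version

    def active(self) -> str:
        return self._history[-1][1]

    def rollback(self) -> str:
        """Drop the newest version and return the prompt that is active again."""
        if len(self._history) < 2:
            raise RuntimeError("No earlier version to roll back to.")
        self._history.pop()
        return self.active()


registry = PromptRegistry()
registry.publish("Answer briefly and cite the source document.")
registry.publish("Answer in detail.")
print(registry.rollback())  # the first prompt is active again
```

Because the version id is derived from the prompt text, any edit produces a new id that can be attached to evaluation results and logs.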

Large language model development services that ignore this will struggle to scale enterprise-grade systems.

3. Evaluation Pipelines Replace Traditional QA

Manual testing is not enough for probabilistic systems.

Instead, teams implement:

- Golden datasets per feature

- Automated semantic scoring

- Regression checks before release

- Continuous production-level evaluation

If behavior drifts, the system detects it.

Not the customer.
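
A small sketch of a golden-dataset regression check. Note the assumption: `difflib.SequenceMatcher` measures lexical similarity only and is used here as a placeholder for a real semantic scorer such as an embedding model or an LLM judge. The golden examples and `fake_model` are hypothetical.

```python
from difflib import SequenceMatcher

GOLDEN = [  # hypothetical golden examples for one feature
    {"prompt": "capital of France?", "expected": "Paris"},
    {"prompt": "2 + 2?", "expected": "4"},
]


def similarity(a: str, b: str) -> float:
    """Lexical similarity in [0, 1]; a placeholder for a real semantic scorer."""
    return SequenceMatcher(None, a.lower(), b.lower()).ratio()


def failing_cases(generate, golden, threshold: float = 0.8) -> list[str]:
    """Return the prompts whose output scores below the threshold."""
    return [
        case["prompt"]
        for case in golden
        if similarity(generate(case["prompt"]), case["expected"]) < threshold
    ]


def fake_model(prompt: str) -> str:  # stand-in for a real model call
    return {"capital of France?": "Paris", "2 + 2?": "five"}[prompt]


print(failing_cases(fake_model, GOLDEN))  # ['2 + 2?']
```

Running this on every prompt or model change turns the golden dataset into a regression suite for behavior.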

4. Observability Expands Beyond Infrastructure

Infra dashboards show:

- CPU

- Memory

- Latency

- Error rates

LLMOps dashboards show:

- Token spend per feature

- Hallucination frequency

- Output consistency over time

- User correction signals

- Drift by customer segment

This is behavioral telemetry.

Without it, AI becomes opaque.
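
As one example of behavioral telemetry, the sketch below computes the user correction rate per feature. The `Interaction` record and its fields are assumptions about what an application might log, not a standard schema.

```python
from dataclasses import dataclass


@dataclass
class Interaction:
    feature: str
    user_corrected: bool  # e.g. the user edited or regenerated the answer


def correction_rate(events: list[Interaction]) -> dict[str, float]:
    """Share of interactions per feature in which the user corrected the output."""
    counts: dict[str, list[int]] = {}
    for event in events:
        total_and_corrected = counts.setdefault(event.feature, [0, 0])
        total_and_corrected[0] += 1
        total_and_corrected[1] += int(event.user_corrected)
    return {f: corrected / total for f, (total, corrected) in counts.items()}


# Hypothetical event log.
events = [
    Interaction("summarize", False),
    Interaction("summarize", True),
    Interaction("chat", False),
    Interaction("chat", False),
]
print(correction_rate(events))  # {'summarize': 0.5, 'chat': 0.0}
```

A rising correction rate is a signal no CPU or latency graph will show.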

5. AI Has a Defined Owner

LLMOps requires someone who:

- Prioritizes AI work weekly

- Owns behavioral KPIs

- Approves model upgrades

- Is accountable for drift

Without ownership, AI systems decay.

Just like infrastructure did before DevOps.

Move beyond experimentation with structured LLM production workflows, behavioral observability, and controlled model governance.

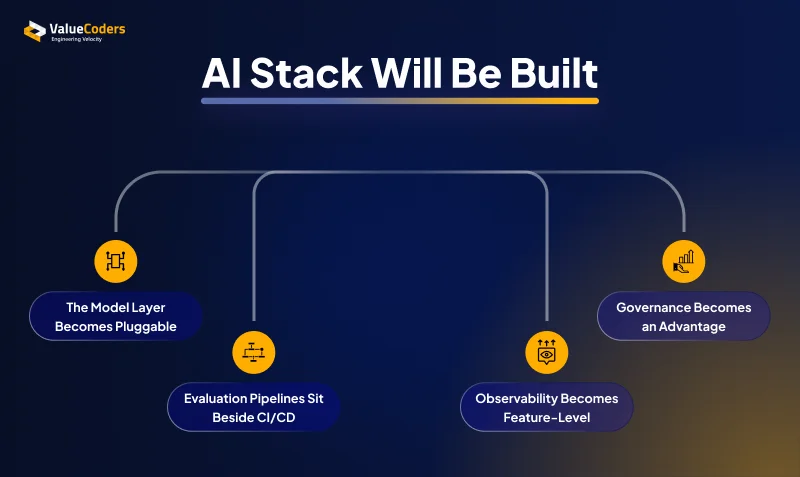

How the 2026 AI Product Stack Will Be Built Differently

LLMOps doesn’t just add processes.

It reshapes architecture.

AI-native systems in 2026 will not look like today’s “LLM + wrapper” products.

They will be structured around behavioral control.

1. The Model Layer Becomes Pluggable

Today:

- One primary model

- Hardcoded integration

- Occasional upgrades

By 2026:

- Multiple models per workflow

- Runtime routing based on:

  - Cost thresholds

  - Task complexity

  - Regional compliance

  - Latency SLAs

- Continuous benchmarking

Model choice becomes dynamic infrastructure.

Enterprise LLM deployment will require flexibility, not vendor lock-in.
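
Runtime routing can be sketched as a filter over a model catalog followed by a cheapest-first choice. Everything in the catalog below (model names, prices, latencies, regions, complexity levels) is invented for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class ModelOption:
    name: str
    cost_per_1k_tokens: float
    p95_latency_ms: int
    regions: frozenset
    max_complexity: int  # highest task complexity (1-5) this model handles well


CATALOG = [  # hypothetical models and numbers
    ModelOption("small", 0.2, 300, frozenset({"eu", "us"}), 2),
    ModelOption("large", 2.0, 1200, frozenset({"us"}), 5),
    ModelOption("large-eu", 2.5, 1500, frozenset({"eu"}), 5),
]


def route(complexity: int, region: str, latency_budget_ms: int,
          max_cost_per_1k: float) -> Optional[ModelOption]:
    """Pick the cheapest model that satisfies every constraint, or None."""
    eligible = [
        m for m in CATALOG
        if m.max_complexity >= complexity
        and region in m.regions
        and m.p95_latency_ms <= latency_budget_ms
        and m.cost_per_1k_tokens <= max_cost_per_1k
    ]
    return min(eligible, key=lambda m: m.cost_per_1k_tokens, default=None)


choice = route(complexity=4, region="eu", latency_budget_ms=2000, max_cost_per_1k=3.0)
print(choice.name if choice else "no eligible model")  # large-eu
```

Returning `None` when nothing qualifies forces the caller to decide on a fallback explicitly instead of silently breaking a compliance or latency constraint.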

2. Evaluation Pipelines Sit Beside CI/CD

Traditional CI/CD validates code.

In 2026, release pipelines will also validate behavior.

That includes:

- Automated semantic regression tests

- Model comparison before release

- Feature-level scoring thresholds

- Release blocking on behavioral degradation

If evaluation fails, deployment fails.

Behavior becomes part of the release gate.
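
A minimal sketch of such a gate, assuming evaluation has already produced one score per feature. The feature names and thresholds are hypothetical.

```python
# Hypothetical minimum scores per feature, agreed before the release.
THRESHOLDS = {"summarize": 0.85, "chat": 0.80}


def gate(scores: dict[str, float], thresholds: dict[str, float]) -> list[str]:
    """Return the features that block the release (missing or below threshold)."""
    return [
        feature
        for feature, minimum in thresholds.items()
        if scores.get(feature, 0.0) < minimum
    ]


def main(scores: dict[str, float]) -> int:
    """Report blocked features and return a process-style exit code."""
    blocked = gate(scores, THRESHOLDS)
    for feature in blocked:
        print(f"BLOCKED: {feature} scored below {THRESHOLDS[feature]}")
    return 1 if blocked else 0


# In a CI step this value would be passed to sys.exit() so a non-zero
# code fails the pipeline.
exit_code = main({"summarize": 0.91, "chat": 0.74})
print("exit code:", exit_code)
```

Treating a missing score as a failure is a deliberate choice here: a feature that was not evaluated should not ship by default.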

3. Observability Becomes Feature-Level

In scaled environments:

- Product monitors accuracy

- Finance monitors token economics

- Compliance monitors output risk

- Engineering monitors stability

AI telemetry integrates into core operating dashboards.

4. Governance Becomes a Competitive Advantage

In enterprise markets:

- Buyers will ask about model governance

- Procurement will demand audit logs

- Security teams will review AI pipelines

- Compliance will inspect prompt management

Top LLM developers in 2026 won’t just optimize accuracy; they’ll optimize operational maturity.

Teams without it will stall in review cycles.
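
Audit logs are one of the more concrete asks in that list. The sketch below shows one possible approach, a hash-chained log in which each entry covers the previous entry's hash so that edits are detectable. The field names and sample entries are assumptions, and a production log would also need timestamps and durable storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash for the first entry's predecessor


def _digest(body: dict) -> str:
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode()).hexdigest()


def append_entry(log: list[dict], actor: str, action: str, detail: str) -> dict:
    """Append an entry whose hash covers its content and the previous hash."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "action": action, "detail": detail, "prev": prev_hash}
    entry = {**body, "hash": _digest(body)}
    log.append(entry)
    return entry


def verify(log: list[dict]) -> bool:
    """Recompute every hash; False means an entry was altered or reordered."""
    prev_hash = GENESIS
    for entry in log:
        body = {k: entry[k] for k in ("actor", "action", "detail", "prev")}
        if entry["prev"] != prev_hash or entry["hash"] != _digest(body):
            return False
        prev_hash = entry["hash"]
    return True


log: list[dict] = []
append_entry(log, "alice", "prompt_update", "support-bot prompt changed")
append_entry(log, "bob", "model_upgrade", "approved candidate model")
print(verify(log))   # True for an untouched log
log[0]["detail"] = "edited"
print(verify(log))   # False after tampering
```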

Strategic Implications: How AI Products Will Be Built & Run in 2026

By 2026, AI capability will be commoditized.

Operational maturity will not.

The differentiator won’t be who has access to better models.

It will be who can govern them predictably.

1. AI Products Will Be Designed With Feedback Loops by Default

AI systems will no longer be:

- Static feature releases

- “Ship and observe” experiments

They will be built with:

- Behavioral telemetry embedded from day one

- Continuous evaluation pipelines

- Structured drift detection

- User correction feedback loops

Behavior becomes measurable infrastructure.
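
Structured drift detection can start very simply: compare a rolling average of production scores against a baseline. The sketch below assumes each response already receives a quality score between 0 and 1; the baseline, window size, and tolerance are arbitrary example values.

```python
from collections import deque
from statistics import mean


class DriftMonitor:
    """Flags drift when the rolling mean score falls too far below a baseline."""

    def __init__(self, baseline: float, window: int = 50, tolerance: float = 0.05):
        self.baseline = baseline
        self.tolerance = tolerance
        self.scores: deque = deque(maxlen=window)

    def record(self, score: float) -> bool:
        """Add one production score; return True if drift is detected."""
        self.scores.append(score)
        window_full = len(self.scores) == self.scores.maxlen
        return window_full and mean(self.scores) < self.baseline - self.tolerance


monitor = DriftMonitor(baseline=0.90, window=3)
for score in [0.91, 0.88, 0.80, 0.79, 0.78]:
    if monitor.record(score):
        print(f"drift detected after score {score}")
```

Waiting for a full window before alerting avoids false alarms from the first few samples, at the cost of a short delay in detection.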

2. Continuous Semantic Evaluation Becomes Standard Practice

Just as CI/CD became non-negotiable:

- Automated regression scoring will block releases

- Model upgrades will require benchmarking

- Prompt changes will require validation

- Safety thresholds will be enforced programmatically

Manual spot-checking won’t survive enterprise scale.

3. AI Observability Becomes Cross-Functional

By 2026:

- Product tracks feature accuracy

- Finance tracks token efficiency

- Compliance tracks output risk

- Engineering tracks behavioral stability

LLMOps integrates AI into core operational dashboards.

Not innovation dashboards.

4. Model Governance Becomes a Board-Level Topic

As AI systems influence revenue and risk:

- Model lifecycle decisions affect enterprise deals

- Governance posture affects procurement cycles

- Auditability affects regulatory approvals

AI maturity becomes part of strategic positioning.

Not just engineering depth.

5. The Real Competitive Divide

Two companies will both claim “AI-powered.”

One will:

- Ship fast

- Debug reactively

- Discover drift late

- Control cost poorly

The other will:

- Instrument behavior

- Govern upgrades

- Predict cost

- Pass enterprise scrutiny

Both build AI.

Only one runs it responsibly.

That’s the LLMOps divide.

Operationalize large language model operations with evaluation pipelines and behavioral observability built in.

How ValueCoders Helps Product Teams Build the Right Foundations

ValueCoders works with tech product companies, GCC engineering arms, and modernization-focused enterprises to operationalize AI systems with discipline.

That includes:

- Designing governed LLM production workflows

- Implementing evaluation pipelines from day one

- Structuring enterprise LLM deployment with auditability

- Embedding semantic observability into delivery

- Aligning AI features to measurable outcomes

For scaling organizations, our support models include:

- Staff augmentation: embedding experienced LLM engineers into existing governance structures

- Dedicated AI pods: outcome-driven teams aligned to roadmap milestones

- Orchestrated delivery models: AI workflows integrated with DevOps, QA, and platform engineering

- Long-term run-mode support: ensuring behavioral stability post-launch

Final Thoughts

AI capability is accelerating. Operational maturity is not.

In the coming years, the advantage won’t come from better models alone. It will come from running them with discipline, predictability, and control.

DevOps became standard when system complexity demanded structure. AI systems are reaching that same point.

As LLMs move into revenue-critical workflows, governance and observability will shift from optional improvements to baseline expectations.

LLMOps vs DevOps is not about replacing DevOps.

It’s about extending operational discipline into the behavioral layer.

By 2026, large language model operations will not feel new.

They will simply define how serious AI products are built and run.